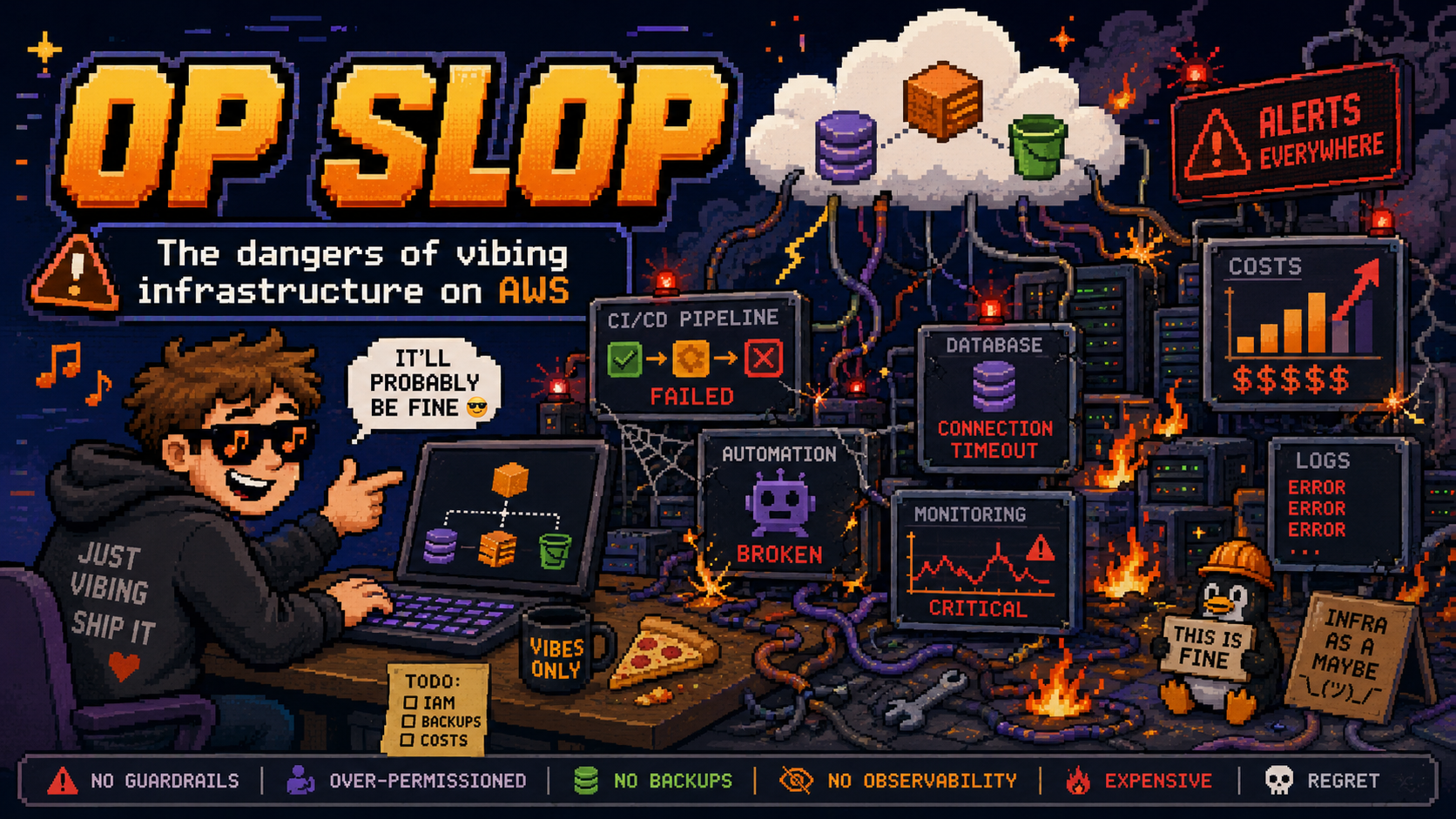

OpSlop and How to Avoid It

AI agents deploying infrastructure directly into your AWS account is ClickOps at machine speed. The Agent Toolkit for AWS shows how to keep agents producing IaC instead.

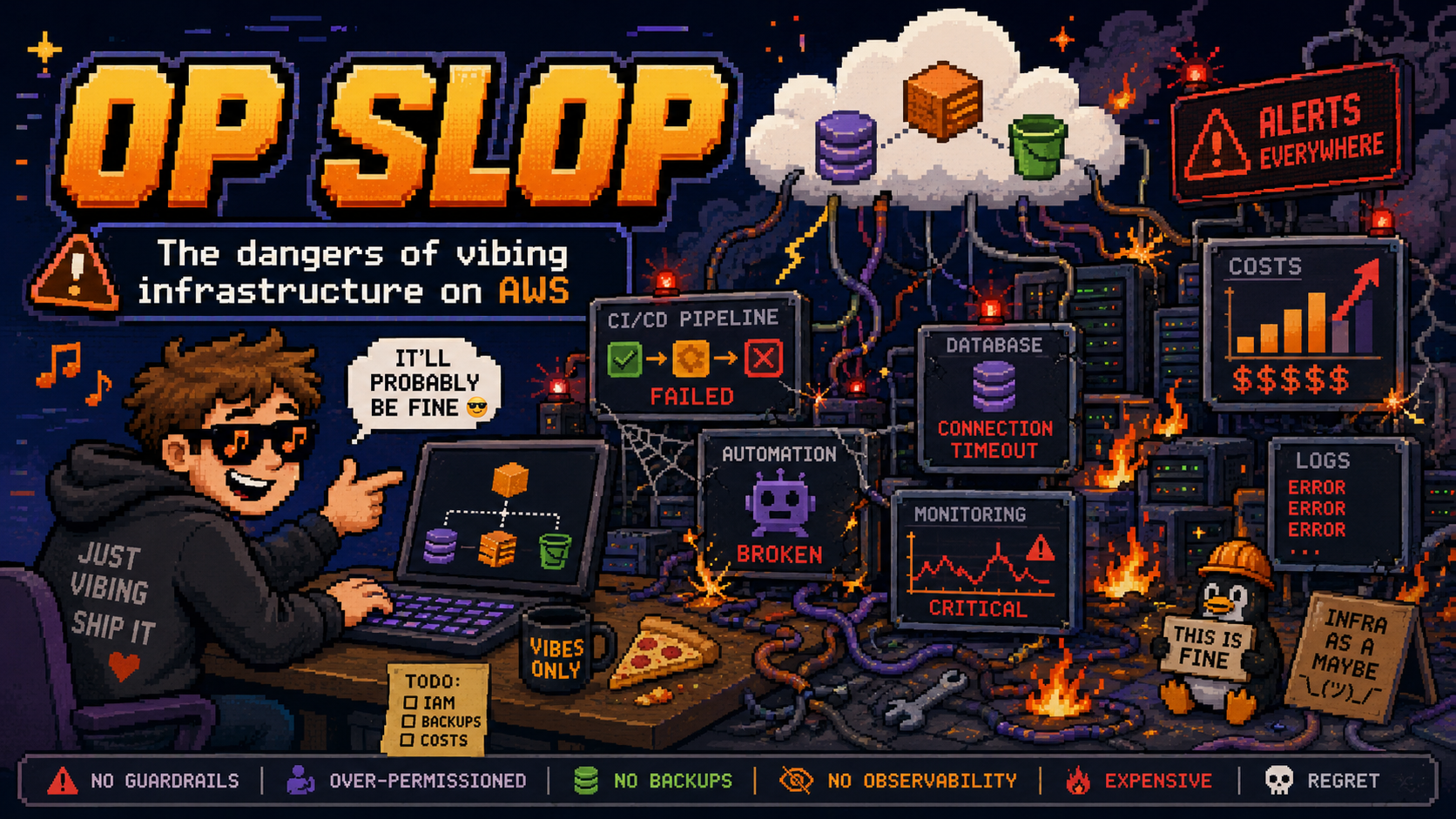

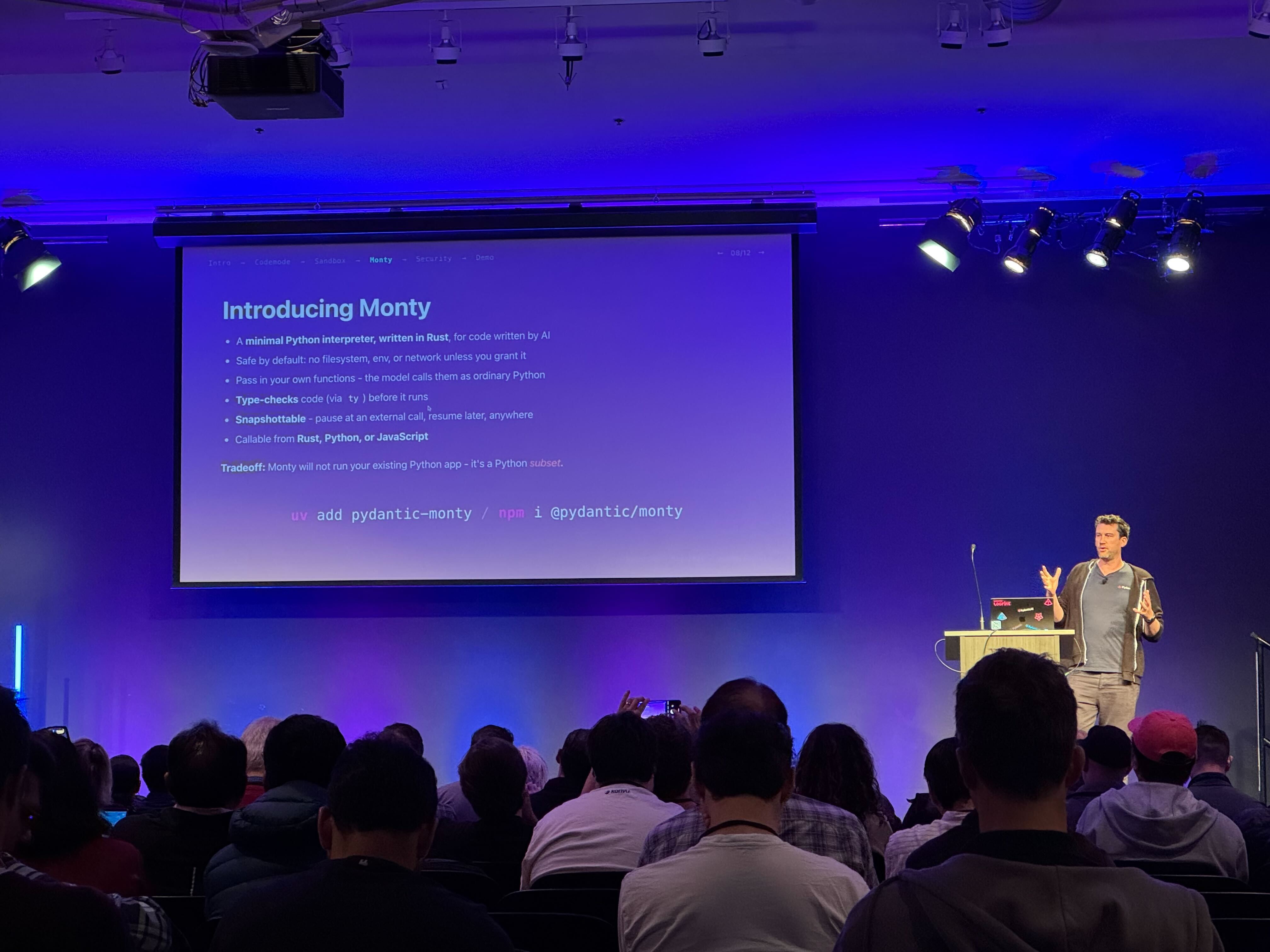

Let LLMs write Python that orchestrates parallel tool calls in a single invocation — Pydantic Monty is the sandboxed interpreter that makes it safe and fast.

I tested 27 models on Amazon Bedrock for em dash usage. Llama produces zero. Claude and Palmyra can't stop. What does that tell us about how LLMs learn style?

My AI agent has access to my email and Slack. Here are four tactics I use to stop it from sending a career-ending message — from system prompts to deterministic hooks, LLM-as-a-judge steering, and Cedar policies at cloud scale.

How to wire together AgentCore Browser, Nova Act, and IAM authentication to build a production browser agent on AWS, with complete working code.

I fine-tuned Qwen2.5-0.5B to always talk like a pirate using LoRA. The first attempt failed because of a system prompt in the training data. Here's what I learned about training data design.

Nine Python agent frameworks, compared honestly. Architecture, code samples, community sentiment, and what actually matters when you're picking one.

Context Hub is a curated documentation registry for coding agents. Here's how to add your API before someone else does.

AI agents, runtime software, and what comes after SaaS.

I joined Romain Jourdan on the AWS Developers Podcast to discuss OpenClaw, async agentic tools, Strands Labs, AI Functions, and the future of software development.